Is It Time to Regulate AI? (WSJ)

Artificial intelligence is fast becoming a part of being both a consumer and an employee. Apply for a credit card or mortgage and many banks will use AI to weigh your creditworthiness. Apply for a job and the employer could use AI to rank your application. Call a customer-service number and AI technology might screen and route your call.

[…]

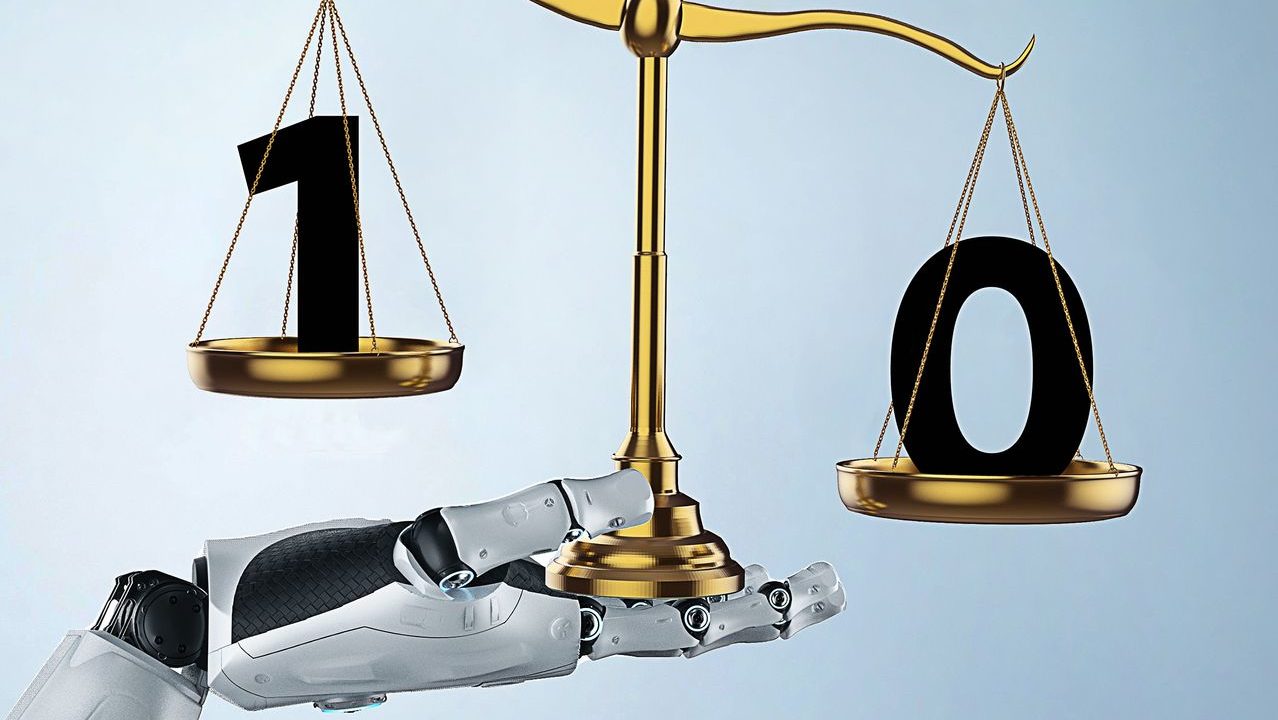

WSJ: Do we need greater regulation of artificial intelligence? Or just government guidance that would lead the industry to regulate itself?

MR. CRENSHAW: Artificial intelligence has the potential to significantly improve society, for example by securing our networks, preventing fraud, expanding financial inclusion and helping medical researchers develop treatments more quickly. At the same time, every iteration of technology has risks.

If we rush to ban AI outright or to overregulate it, we will delay or never realize these societally beneficial uses. There may come a time when we find we need to regulate AI, as is the case with privacy, but we should approach this issue thoughtfully rather than rush to regulate.

This is one of the reasons why the U.S. Chamber of Commerce launched its new AI Commission on Competitiveness, Inclusion and Innovation, to study how best to approach AI from a regulatory perspective by hearing directly from all relevant stakeholders, including consumer advocates, business and academia. This commission will release a report this fall.

WSJ: How should federal regulation, if warranted, be put in place? Should there be a central agency to oversee regulation of AI? Or should each government agency devise policies in its own sphere of influence, such as financial services or healthcare or housing?

MR. CRENSHAW: Before we determine which agencies are involved in regulating AI, I think an important point needs to be addressed: What is the legal definition of artificial intelligence and machine learning? Once we have that, it will be possible to determine whether a sectoral or a general approach is needed.

WSJ: What specific regulations are warranted? Require businesses to disclose to a regulatory agency their methodology and data used to “train” their AI systems? Allow regulators to run tests of AI algorithms to look for bias or other concerns? Require companies to explain to customers how AI is used?

MR. CRENSHAW: If the government decides that regulation is warranted, agencies should focus on processes that pose a high risk of consumer harm. Policy makers should avoid scenarios in which companies continually have to go to an agency like the FTC to obtain clearance before using algorithms that pose a low risk of harm.

One helpful example is what we’ve seen at the National Institute of Standards and Technology, where public-private partnerships can assist in the validation of AI, as with the facial-recognition vendor test. This allows organizations to have their work verified and validated. We have to be careful to prevent intellectual-property theft, though.

WSJ: What privacy risks are posed by business use of AI? How can these concerns be mitigated?

MR. CRENSHAW: Luckily, from a privacy perspective there are steps that can be taken to ensure consumers have control over how personal information is collected, used and shared. Virginia’s new law, which goes into effect next year, prohibits the processing of sensitive data dealing with things like race or health diagnoses without consumer consent. The law allows some exceptions for societally beneficial uses of data.

There has to be some balance, though, in terms of allowing the use of data for good. For example, sensitive data may be necessary to target traditionally underserved communities and help get them access to credit. AI could also be used to synthesize clinical trials.

Safeguards need to be put in place so that data can be used securely, protecting society while promoting privacy.

WSJ: What downsides could AI regulation present? Could it stifle innovation that might benefit consumers and the economy? How could this be mitigated?

MR. CRENSHAW: The U.S. is in a race right now regarding artificial intelligence that will in many ways dictate which country leads economically. If we have 50 states passing privacy or AI regulation, that puts us at a distinct disadvantage against our competitors.

There is also more of a tolerance for risk in the development of technology in China than in the U.S. We need a uniquely American framework that puts a high priority on protecting consumers and innovation. We shouldn’t look to moratoriums or banning technology outright. We should seek to encourage the responsible and ethical use of this technology.
